How to Build an Underwriting API: Integration Architecture Guide

A practical guide to building underwriting APIs for life insurance, covering integration architecture, data standards, and real-world implementation patterns.

Building an underwriting API integration architecture is one of those projects that sounds straightforward on a whiteboard and then gets complicated the moment you touch a production system. Most carriers are running policy administration platforms built in the early 2000s — sometimes older — and the idea of wrapping modern RESTful APIs around these systems involves more translation layers than anyone budgets for. But carriers who have pulled it off are seeing real results: faster time-to-decision, fewer manual handoffs, and underwriting workflows that can actually talk to external data sources without someone exporting a CSV.

"The modernization of insurance analytics hinges on re-architecting legacy systems into modular, cloud-native ecosystems that support rapid experimentation and scalable data-driven decision making." — DZone, Cloud-Native Microservice Architectures for Insurance (2025)

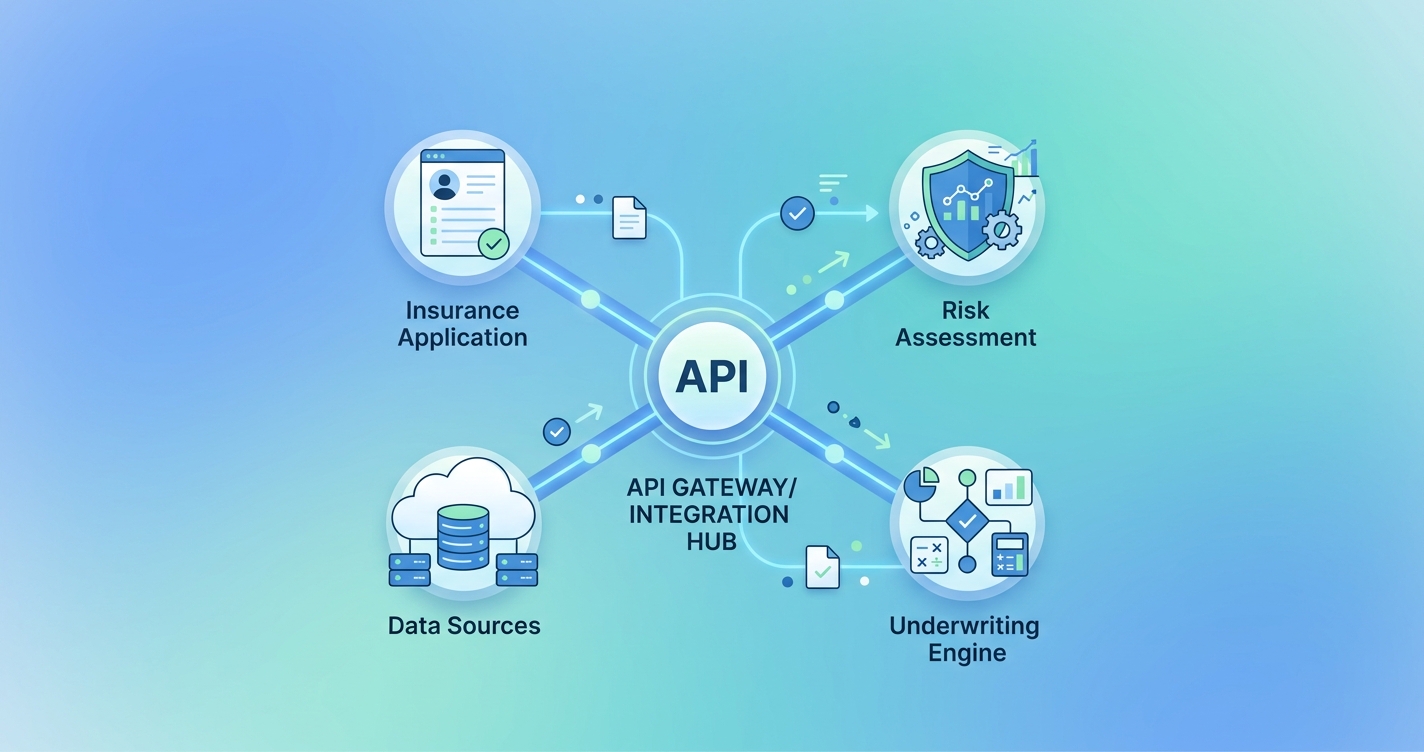

What an underwriting API architecture actually looks like

The phrase "underwriting API" gets thrown around loosely. In practice, it means a collection of services — not a single endpoint — that handle different parts of the underwriting process. A submission comes in through one service. Medical data gets pulled from another. Risk scoring runs through a third. The decision gets assembled from all of these pieces and pushed back to the policy administration system.

A paper published in the World Journal of Advanced Engineering Technology and Sciences (Akinkunmi et al., 2025) analyzed API integration patterns across major insurance platforms and found that carriers using microservices-based API architectures achieved straight-through processing rates of up to 70%, compared to 15-25% for carriers relying on monolithic integrations.

Here's what a typical architecture looks like when you break it down:

| Component | Function | Common Standards | Integration Pattern |
|---|---|---|---|
| Submission intake API | Receives applications from agents, portals, embedded channels | ACORD XML/JSON, REST | Synchronous |
| Data enrichment layer | Pulls MIB, Rx history, MVR, credit, EHR | FHIR R4, HL7v2, proprietary | Asynchronous, event-driven |
| Rules engine API | Applies underwriting guidelines, triage logic | Internal rules, vendor SDKs | Synchronous |
| Risk scoring service | Runs predictive models on aggregated data | ML model serving (REST/gRPC) | Synchronous or async |
| Decision orchestrator | Assembles final underwriting decision | Internal | Event-driven, saga pattern |
| Policy admin connector | Pushes decisions back to PAS | ACORD, proprietary adapters | Async with retry |

The key insight from carriers who have done this well: don't try to replace your policy administration system. Build APIs around it. The PAS becomes one node in a larger mesh, not the center of the universe.

The data standards problem (and why it's worse than you think)

Insurance has a standards body — ACORD — that has been working on data standards for decades. Their 2025 Member Report, released in March 2026, showed increased engagement from member companies and new dedicated departments for research, education, and advocacy around digital-first strategies. ACORD's Electronic Health Records Standard creates a lightweight vehicle for medical information inputs optimized for life insurance underwriting.

But here's the thing: ACORD adoption is uneven. Large carriers generally support ACORD XML for submissions. Smaller MGAs and insurtechs often skip it entirely and use proprietary JSON schemas. And on the healthcare side, FHIR R4 is increasingly the standard for electronic health record access, but the overlap between what FHIR exposes and what underwriters actually need isn't clean. A FHIR Patient resource gives you demographics. A FHIR Condition resource gives you diagnoses. But underwriters want a synthesized risk picture, which means someone still has to build the translation layer between raw FHIR bundles and underwriting-relevant data structures.
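To make the translation-layer point concrete, here is a minimal sketch of reducing a FHIR R4 bundle to an underwriting-oriented summary. The `ApplicantHealthSummary` class and `summarize_bundle` function are hypothetical names invented for illustration; only the FHIR field names (`resourceType`, `birthDate`, `gender`, `clinicalStatus`, `code.coding`) come from the FHIR R4 resource definitions. A real translation layer would need far more than this (terminology mapping, onset dates, severity, deduplication).

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class ApplicantHealthSummary:
    """Hypothetical internal structure; not an ACORD or FHIR type."""
    birth_date: Optional[str] = None   # kept as a string: FHIR dates may be partial
    gender: Optional[str] = None
    active_condition_codes: List[str] = field(default_factory=list)


def summarize_bundle(bundle: dict) -> ApplicantHealthSummary:
    """Collapse Patient and Condition resources into one flat summary."""
    summary = ApplicantHealthSummary()
    for entry in bundle.get("entry", []):
        resource = entry.get("resource", {})
        resource_type = resource.get("resourceType")
        if resource_type == "Patient":
            summary.birth_date = resource.get("birthDate")
            summary.gender = resource.get("gender")
        elif resource_type == "Condition":
            status_codings = resource.get("clinicalStatus", {}).get("coding", [])
            statuses = {coding.get("code") for coding in status_codings}
            if "active" not in statuses:
                continue  # assumption: only active conditions matter for this summary
            for coding in resource.get("code", {}).get("coding", []):
                if coding.get("code"):
                    summary.active_condition_codes.append(coding["code"])
    return summary
```

Even this toy version has to make an underwriting judgment (which clinical statuses count), which is exactly the kind of decision raw FHIR does not make for you.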

According to 1Up Health's 2026 healthcare interoperability predictions, the industry is moving toward using FHIR data standards for much richer clinical context in claims and risk assessment. But "moving toward" is doing a lot of work in that sentence. Most carriers are still dealing with a patchwork of HL7v2 feeds, flat files from third-party data vendors, and the occasional FHIR endpoint from a health system that's ahead of the curve.

What this means for your API design

Your data enrichment layer needs to be adapter-based. Each external data source gets its own adapter that normalizes the response into your internal schema. When a new data source comes online — or an existing one changes its format — you swap the adapter without touching the orchestration logic. This sounds like basic software engineering, and it is, but the number of insurance platforms that have data source formats hardcoded into their core underwriting logic is staggering.
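A minimal sketch of what adapter-based enrichment might look like. Every name here (`SourceAdapter`, `EnrichmentResult`, `RxHistoryAdapter`, `MvrAdapter`, `enrich_all`) is hypothetical, and the vendor payloads are stubbed stand-ins rather than real vendor formats. The point is only that the orchestration code sees one internal shape regardless of what each source returns.

```python
from abc import ABC, abstractmethod
from dataclasses import dataclass
from typing import Any, Dict, List


@dataclass(frozen=True)
class EnrichmentResult:
    """Hypothetical internal schema shared by every adapter."""
    source: str
    applicant_id: str
    facts: Dict[str, Any]


class SourceAdapter(ABC):
    name: str

    @abstractmethod
    def fetch_raw(self, applicant_id: str) -> Any:
        """Call the external source and return its native payload."""

    @abstractmethod
    def normalize(self, applicant_id: str, raw: Any) -> EnrichmentResult:
        """Translate the native payload into the internal schema."""

    def enrich(self, applicant_id: str) -> EnrichmentResult:
        return self.normalize(applicant_id, self.fetch_raw(applicant_id))


class RxHistoryAdapter(SourceAdapter):
    name = "rx_history"

    def fetch_raw(self, applicant_id: str) -> Any:
        # Stub: pretend the vendor returns JSON-like data.
        return {"fills": [{"drug": "atorvastatin", "days": 90}]}

    def normalize(self, applicant_id: str, raw: Any) -> EnrichmentResult:
        meds = [fill["drug"] for fill in raw["fills"]]
        return EnrichmentResult(self.name, applicant_id, {"medications": meds})


class MvrAdapter(SourceAdapter):
    name = "mvr"

    def fetch_raw(self, applicant_id: str) -> Any:
        # Stub: pretend the vendor returns a pipe-delimited flat record.
        return "VIOLATIONS|2"

    def normalize(self, applicant_id: str, raw: Any) -> EnrichmentResult:
        count = int(raw.split("|")[1])
        return EnrichmentResult(self.name, applicant_id, {"violation_count": count})


def enrich_all(applicant_id: str, adapters: List[SourceAdapter]) -> List[EnrichmentResult]:
    # Orchestration depends only on the SourceAdapter interface.
    return [adapter.enrich(applicant_id) for adapter in adapters]
```

If the MVR vendor switches from flat files to JSON, only `MvrAdapter` changes; `enrich_all` and everything downstream stay untouched.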

Microservices vs. monolith: what actually works in insurance

The insurance industry had its microservices moment around 2022-2023, and the results have been mixed enough to be instructive. A case study from NthBit documented an insurtech platform migration from monolithic architecture to microservices, focusing on decoupling business logic into discrete, independently scalable services with an API-driven frontend-backend separation.

The carriers that succeeded with microservices shared a few traits. They started small — usually with a single underwriting function like data enrichment or document triage — and expanded outward. They invested heavily in API gateway infrastructure and service mesh tooling before they started splitting services. And they had engineering leadership that understood this was a multi-year transformation, not a six-month project.

The ones that struggled tried to decompose everything at once. They ended up with dozens of tiny services that all needed to talk to each other synchronously, which created latency problems worse than the monolith they replaced. Distributed monoliths, as the industry calls them.

For most carriers, the practical answer is somewhere in between: a modular monolith or a small number of well-defined services rather than full microservice decomposition. Your submission intake, data enrichment, and risk scoring can be separate services. Your rules engine and decision logic probably shouldn't be split into 15 microservices.

The API gateway question

Every underwriting API architecture needs a gateway layer. This is where you handle authentication, rate limiting, request routing, and — critically for insurance — audit logging. Regulatory requirements mean every data access and every underwriting decision needs a paper trail. Your API gateway is the natural place to capture this.
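As a rough illustration of capturing an audit record on every call, here is a gateway-agnostic sketch. The `AuditLog` class and `audited` decorator are hypothetical, and chaining records with SHA-256 hashes is my own choice for making tampering detectable; it is not something the sources above prescribe. A production system would more likely rely on the gateway product's logging plus write-once storage.

```python
import hashlib
import json
from datetime import datetime, timezone
from functools import wraps

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def _digest(body: dict) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only, hash-chained log: edits to old records break verification."""

    def __init__(self):
        self.records = []

    def append(self, actor: str, action: str, resource: str) -> dict:
        body = {
            "ts": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "action": action,
            "resource": resource,
            "prev_hash": self.records[-1]["hash"] if self.records else GENESIS,
        }
        record = {**body, "hash": _digest(body)}
        self.records.append(record)
        return record

    def verify(self) -> bool:
        prev = GENESIS
        for record in self.records:
            body = {k: v for k, v in record.items() if k != "hash"}
            if body["prev_hash"] != prev or _digest(body) != record["hash"]:
                return False
            prev = record["hash"]
        return True


def audited(log: AuditLog, action: str):
    """Wrap a handler so each call is logged before it runs."""
    def decorator(handler):
        @wraps(handler)
        def wrapper(actor, resource, *args, **kwargs):
            log.append(actor, action, resource)
            return handler(actor, resource, *args, **kwargs)
        return wrapper
    return decorator


log = AuditLog()


@audited(log, "read_rx_history")
def get_rx_history(actor: str, resource: str) -> dict:
    return {"resource": resource, "medications": []}  # stubbed handler
```

Note that a hash chain makes the log tamper-evident rather than truly immutable; the storage layer still has to prevent deletion.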

EPAM's research on API-first mindset in underwriting portal development emphasizes that the ability to design portals with appropriate architecture, workflow, data mapping, and APIs is what separates leading insurance products from the rest. The gateway isn't just infrastructure plumbing. It's the control plane for your entire underwriting data flow.

Security and compliance considerations

Insurance APIs handle some of the most sensitive personal data in any industry: medical records, financial information, prescription histories. The security architecture isn't optional and it isn't a phase-two concern.

According to VRCIS's analysis of secure API integration for insurance portals, the core requirements include:

- OAuth 2.0 / OpenID Connect for authentication and authorization across services

- Mutual TLS for service-to-service communication within the underwriting mesh

- Field-level encryption for PHI and PII that traverses multiple services

- Consent management APIs that track and enforce applicant data sharing permissions

- Comprehensive audit logging at the API gateway and service level

State insurance regulations add another layer. Different states have different requirements for how long underwriting data must be retained, who can access it, and how applicants must be notified about data use. Your API architecture needs to handle this variability without requiring per-state code branches. Configuration-driven compliance rules, surfaced through an internal compliance API, are the pattern that scales.
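One way to picture configuration-driven compliance is a lookup table that the code consults instead of branching on state. This is a sketch only: `ComplianceRule`, `rule_for`, and `retention_deadline` are hypothetical names, the jurisdictions are deliberately fictional, and the numbers are placeholders rather than actual regulatory requirements.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class ComplianceRule:
    retention_years: int
    notice_required: bool


# Placeholder values for illustration only; NOT real state requirements.
DEFAULT_RULE = ComplianceRule(retention_years=7, notice_required=True)
RULES_BY_JURISDICTION = {
    "STATE_A": ComplianceRule(retention_years=10, notice_required=True),
    "STATE_B": ComplianceRule(retention_years=5, notice_required=False),
}


def rule_for(jurisdiction: str) -> ComplianceRule:
    # Unknown jurisdictions fall back to the default rule.
    return RULES_BY_JURISDICTION.get(jurisdiction, DEFAULT_RULE)


def retention_deadline(jurisdiction: str, decision_date: date) -> date:
    """Earliest date the underwriting record may be purged."""
    target_year = decision_date.year + rule_for(jurisdiction).retention_years
    try:
        return decision_date.replace(year=target_year)
    except ValueError:
        # Feb 29 decision date landing in a non-leap year.
        return decision_date.replace(year=target_year, day=28)
```

In practice the table would live in a configuration store behind the compliance API, so a rule change is a data update with its own review trail rather than a code deploy.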

| Security Layer | Implementation | Regulatory Driver |
|---|---|---|
| Transport encryption | Mutual TLS, TLS 1.3 | State data protection laws, HIPAA |
| Authentication | OAuth 2.0 + OIDC | NAIC model regulations |
| Authorization | Attribute-based access control (ABAC) | Minimum necessary standard |
| Data encryption at rest | AES-256, envelope encryption | State breach notification laws |
| Audit trail | Immutable event log per API call | Market conduct exam requirements |
| Consent tracking | Consent management microservice | FCRA, state privacy laws |

Real-world integration patterns that work

Pattern 1: Event-driven data enrichment

Instead of synchronously calling 15 data sources when a submission comes in, publish a "new submission" event to a message broker. Individual data enrichment services subscribe to this event, fetch their respective data, and publish enrichment results back. An aggregator service assembles the complete picture once all sources have responded (or timed out). This pattern handles the reality that some data sources respond in milliseconds (credit checks) and others take minutes or hours (APS requests from physicians).
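The aggregation step can be sketched in-process with `asyncio`, with concurrent tasks standing in for the message broker and subscriber services. `fetch_source` and `aggregate` are hypothetical names, and the delays simulate fast and slow sources; a real deployment would use an actual broker and durable state rather than a single process.

```python
import asyncio
from typing import Any, Dict, List, Tuple

Source = Tuple[str, float, Dict[str, Any]]  # (name, simulated delay in seconds, payload)


async def fetch_source(name: str, delay: float, payload: Dict[str, Any]):
    # Stand-in for an enrichment service reacting to a "new submission" event.
    await asyncio.sleep(delay)
    return name, payload


async def aggregate(sources: List[Source], timeout: float):
    """Collect whatever arrives within `timeout`; report the rest as timed out."""
    tasks = {
        asyncio.create_task(fetch_source(name, delay, payload)): name
        for name, delay, payload in sources
    }
    done, pending = await asyncio.wait(list(tasks), timeout=timeout)

    # Stop waiting on slow sources; they can be picked up by a later pass.
    for task in pending:
        task.cancel()
    await asyncio.gather(*pending, return_exceptions=True)

    results = dict(task.result() for task in done)
    timed_out = sorted(tasks[task] for task in pending)
    return results, timed_out
```

For example, `asyncio.run(aggregate([("credit", 0.01, {"score": 710}), ("aps", 5.0, {})], timeout=0.2))` returns the credit result and lists `aps` as timed out, which the orchestrator can treat as "decide with partial data" or "park the case until the APS arrives".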

Pattern 2: The saga pattern for underwriting decisions

Underwriting decisions often span multiple steps that can partially fail. An applicant might pass medical screening but get flagged for financial review. The saga pattern — where each step in the underwriting process is a discrete transaction with compensation logic if a later step fails — handles this cleanly. If the financial review requires re-scoring the medical data, the saga coordinator can orchestrate the rollback and retry without manual intervention.
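A stripped-down sketch of the idea, assuming an orchestrated (coordinator-driven) saga held in memory. `SagaStep`, `run_saga`, and the example step functions are hypothetical, and a real coordinator would persist its progress so it can resume after a crash.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class SagaStep:
    name: str
    action: Callable[[dict], None]       # does the work, mutating shared context
    compensate: Callable[[dict], None]   # undoes the work if a later step fails


def run_saga(steps: List[SagaStep], ctx: dict) -> bool:
    """Run steps in order; on failure, compensate completed steps in reverse."""
    completed: List[SagaStep] = []
    for step in steps:
        try:
            step.action(ctx)
        except Exception as exc:
            ctx["failed_step"] = step.name
            ctx["failure_reason"] = str(exc)
            for done in reversed(completed):
                done.compensate(ctx)
            return False
        completed.append(step)
    return True


# Example steps (all stubbed for illustration).
def medical_screen(ctx: dict) -> None:
    ctx["medical_score"] = 0.82


def undo_medical_screen(ctx: dict) -> None:
    ctx.pop("medical_score", None)


def financial_review(ctx: dict) -> None:
    raise RuntimeError("flagged for financial review")


def undo_financial_review(ctx: dict) -> None:
    ctx.pop("financial_ok", None)


STEPS = [
    SagaStep("medical_screen", medical_screen, undo_medical_screen),
    SagaStep("financial_review", financial_review, undo_financial_review),
]
```

Running `run_saga(STEPS, {})` returns `False`, records which step failed, and removes the medical score so a retry starts from a clean context.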

Pattern 3: API versioning with backward compatibility

Underwriting rules change frequently. New data sources get added. Regulatory requirements shift. Your API contracts need to handle versioning without breaking existing integrations. The carriers doing this well use semantic versioning on their APIs, maintain at least two major versions simultaneously, and use feature flags rather than version-specific endpoints for incremental changes.

Current research and evidence

The research on insurance API modernization has matured considerably. Damco Solutions' analysis of API integration in insurance found that APIs connecting core systems — policy administration, underwriting, billing, claims, and CRM — produce the most value when they enable real-time data exchange rather than batch synchronization. This seems obvious, but many carriers are still running nightly batch jobs to sync underwriting data with their policy admin systems.

Thoughtworks' enterprise modernization research for insurers specifically recommends architecture-led modernization over big-bang replacement. Their finding: carriers that start with API wrappers around existing systems and gradually extract functionality into modern services have higher success rates than those attempting full platform replacements.

Walnut Insurance's compilation of best practices for insurance API implementation highlights modular architecture as the foundation. Letting individual API components scale independently to meet varying demand optimizes resource usage and prevents the cascading failures that plagued earlier SOA implementations in insurance.

The future of underwriting API architecture

The next wave isn't just about connecting existing systems more efficiently. It's about APIs enabling entirely new underwriting capabilities.

Contactless health screening is one example. Companies like Circadify are developing camera-based vital sign measurement using rPPG technology that can capture heart rate, respiratory rate, and other biometric data through a smartphone camera. In an API-first underwriting architecture, integrating a new biometric data source like this is an adapter configuration change, not a system redesign. The enrichment layer picks up the new data type, the rules engine adds scoring criteria, and the decision orchestrator factors it in — all through existing API contracts. That's the whole point of building this way.

FHIR adoption will continue broadening the range of health data available through standardized APIs. ACORD's ongoing work on lightweight EHR standards specifically for insurance creates another convergence point. The carriers building flexible, adapter-based API architectures today are positioning themselves to plug into these standards as they mature, without rearchitecting.

Frequently Asked Questions

How long does it take to build an underwriting API integration?

It depends heavily on the starting point. Carriers with relatively modern policy admin systems can build an API wrapper layer in 3-6 months. Full microservices decomposition of underwriting workflows typically takes 18-24 months. Most carriers take an incremental approach, starting with the data enrichment layer (usually the highest-ROI component) and expanding from there.

Should we build or buy our API integration layer?

For core underwriting logic — rules engines, decision orchestration — most carriers find that building internally or heavily customizing vendor platforms is necessary. The underwriting rules are your competitive advantage and they change too frequently to be locked into a vendor's release cycle. For commodity functions like data enrichment adapters, API gateway infrastructure, and audit logging, buying or using managed services usually makes more sense.

What's the difference between ACORD and FHIR in underwriting APIs?

ACORD standards are insurance-specific. They define data schemas for insurance transactions — submissions, policies, claims. FHIR is a healthcare interoperability standard that defines how clinical data (diagnoses, medications, lab results) gets exchanged between systems. A modern underwriting API needs both: ACORD for the insurance workflow and FHIR (or similar) for accessing health data. The translation between them happens in your data enrichment layer.

How do we handle API versioning when underwriting rules change quarterly?

Separate your API contract versions from your rule versions. The API defines the shape of requests and responses. The rules engine behind the API can update independently. Use feature flags or configuration-driven rule sets rather than deploying new API versions for every rule change. Reserve major API version increments for breaking changes to the data contract itself.
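A small sketch of that separation, with made-up values throughout: `API_VERSION`, the rule set identifiers, the face-amount thresholds, and the `triage` function are all hypothetical. The response shape is fixed by the API contract, while the rule set is selected by configuration and merely reported back for auditability.

```python
API_VERSION = "2.1.0"  # changes only when the request/response contract changes

# Hypothetical rule sets; thresholds are illustrative, not real guidelines.
RULESETS = {
    "2025-Q1": {"max_auto_approve_face_amount": 500_000},
    "2025-Q2": {"max_auto_approve_face_amount": 750_000},
}
ACTIVE_RULESET = "2025-Q2"  # in practice read from configuration or a feature flag


def triage(request: dict, ruleset_id: str = ACTIVE_RULESET) -> dict:
    """Route an application; the response shape is identical across rule sets."""
    rules = RULESETS[ruleset_id]
    within_limit = request["face_amount"] <= rules["max_auto_approve_face_amount"]
    return {
        "api_version": API_VERSION,
        "ruleset": ruleset_id,
        "applicant_id": request["applicant_id"],
        "route": "auto" if within_limit else "manual_review",
    }
```

The same request can route differently under two rule sets while clients see no contract change, and recording the `ruleset` value with each decision keeps it explainable after the rules move on.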

Building an underwriting API architecture is a commitment. It's not the kind of project where you can ship a prototype and iterate in production — not with the regulatory and data sensitivity constraints insurance carries. But the carriers that have made the investment are operating in a fundamentally different way than those still routing everything through manual queues and batch processes. The gap between those two groups is widening, and the integration architecture you choose today determines which side you end up on.